Parsing the Web

For as long as the web has existed, we have been writing parsers to extract and categorize its data. Today, this effort has scaled into a massive wave of bot traffic. Whether it is search engines like Googlebot, AI agents feeding LLMs, or specialized scrapers, the goal remains the same: transforming raw HTML into structured, actionable information.

While the specific requirements vary by industry, the fundamental need for data sourcing is universal. E-commerce platforms track competitor pricing; AI systems extract plain text to train models; outreach tools scrape contact details and addresses. At scale, the bottleneck isn’t the data itself; it’s how efficiently your parser can chew through megabytes of potentially broken HTML.

Implementation Approaches

The requirement for data extraction has led to various tooling options. These range from managed services to the low-level libraries used in custom development.

Python is a common choice for this work, supported by a mature set of libraries for building functional parsers. For systems requiring higher concurrency, Go is an effective alternative. It allows for the development of parsers that manage large datasets with efficient resource utilization.

Technical Context

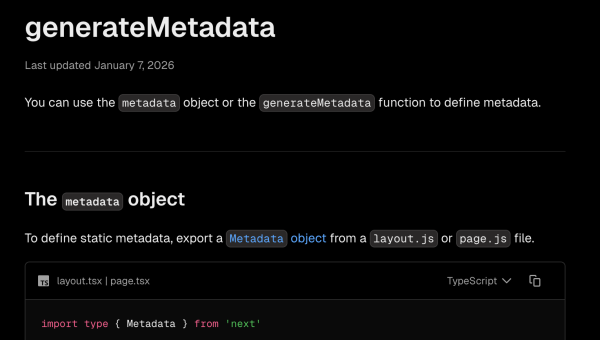

EdgeComet is implemented in Go. Within our rendering service, we maintain a module for extracting page titles and metadata. While these are standard operations, the choice of implementation method involved specific technical trade-offs.

The initial version utilized regular expressions for their low overhead. This decision sparked a heated discussion within the team. Some members strongly disagreed with using regex, advocating for the goquery library to improve robustness. Their primary concern was the handling of malformed HTML, while my focus remained on performance: regex should be significantly faster than parsing a full page and building a DOM tree in memory.

The Regex Implementation

Using regex seems straightforward. After rendering a page in Headless Chrome, we have the full page content in a string. I simply fed it into a couple of regex calls.

The catch is that modern webpages can be huge. We aren’t talking about tens of kilobytes anymore; I often see pages weighing in at a couple of megabytes.

    package extractor

    import (
        "regexp"
        "strings"
    )

    // Patterns for HTML tag and attribute extraction
    var (
        titlePattern       = regexp.MustCompile(`(?is)<title[^>]*>(.*?)</title>`)
        metaTagPattern     = regexp.MustCompile(`(?i)<meta\s+[^>]*>`)
        nameAttrPattern    = regexp.MustCompile(`(?i)\bname\s*=\s*(?:"([^"]*)"|'([^']*)'|([^\s>]+))`)
        contentAttrPattern = regexp.MustCompile(`(?i)\bcontent\s*=\s*(?:"([^"]*)"|'([^']*)'|([^\s>]+))`)
        blockingPattern    = regexp.MustCompile(`(?i)\b(noindex|none)\b`)
    )

    // ExtractTitle returns the page title, truncated to 200 characters
    func ExtractTitle(html string) string {
        matches := titlePattern.FindStringSubmatch(html)
        if len(matches) < 2 {
            return ""
        }
        title := strings.TrimSpace(matches[1])
        // Truncate by runes, not bytes, so multi-byte characters are never cut in half
        if runes := []rune(title); len(runes) > 200 {
            return string(runes[:200])
        }
        return title
    }

    // IsNoindex checks if page is blocked by meta robots or googlebot tags
    func IsNoindex(html string) bool {
        metaTags := metaTagPattern.FindAllString(html, -1)
        var robotsContent, googlebotContent string
        for _, tag := range metaTags {
            name := strings.ToLower(extractAttr(tag, nameAttrPattern))
            content := extractAttr(tag, contentAttrPattern)
            switch name {
            case "robots":
                robotsContent = content
            case "googlebot":
                googlebotContent = content
            }
        }
        // Googlebot directive takes priority over robots
        if googlebotContent != "" {
            return blockingPattern.MatchString(googlebotContent)
        }
        return blockingPattern.MatchString(robotsContent)
    }

    // extractAttr returns the first non-empty capture group: the attribute
    // value in double-quoted, single-quoted, or unquoted form
    func extractAttr(tag string, pattern *regexp.Regexp) string {
        matches := pattern.FindStringSubmatch(tag)
        for i := 1; i < len(matches); i++ {
            if matches[i] != "" {
                return matches[i]
            }
        }
        return ""
    }

    // Usage:
    // title := ExtractTitle(html)
    // blocked := IsNoindex(html)

One of the biggest issues with regex parsing is fragility. Pages on the internet do not follow strict standards or best practices. In the wild, you will encounter edge cases you can’t even imagine.

A <title> tag might contain 5 MB of data. A meta tag might be unclosed and bleed into the <body>. These scenarios make regex complicated to maintain. I found myself writing not just one, but a chain of regex patterns just to extract a single piece of text while handling various edge cases.
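To make the fragility concrete, here is a minimal sketch that reuses the title pattern from the listing above. The helper name `naiveTitle` and the sample markup are made up for illustration; the page has a stale title left inside an HTML comment, and because the regex has no notion of comments, it returns that one instead of the real title.

```go
package main

import (
	"fmt"
	"regexp"
	"strings"
)

// Same expression as titlePattern in the listing above.
var titlePattern = regexp.MustCompile(`(?is)<title[^>]*>(.*?)</title>`)

// naiveTitle (hypothetical helper) returns the first regex match.
// It knows nothing about comments, scripts, or inline SVG.
func naiveTitle(html string) string {
	m := titlePattern.FindStringSubmatch(html)
	if len(m) < 2 {
		return ""
	}
	return strings.TrimSpace(m[1])
}

func main() {
	// Made-up page: an old title was commented out, the real one follows.
	page := `<html><head><!-- <title>Old title</title> --><title>Real title</title></head></html>`
	fmt.Println(naiveTitle(page)) // prints "Old title"
}
```

Fixing this with regex alone means stripping comments first, which is exactly the kind of pattern chain described above.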

We all remember the old saying: “If you have a problem and you decide to use regex, now you have two problems.”

GoQuery

goquery is a staple in the Go ecosystem. It’s more than 6 years old and enjoys massive popularity. Essentially, it’s a wrapper around net/html, the HTML parser maintained by the Go team (it lives at golang.org/x/net/html rather than in the standard library).

It implements a tokenizer and parser under the hood. Basically, it reads the HTML document, parses it into tokens, and builds a full tree of the document in memory. If you are thinking about processing thousands of pages per second, that sounds like a huge overhead: parsing every document, building a tree, and allocating all those objects.

But at the same time, it makes life much easier. I don’t need to care about broken tags, non-compliant markup, or weird nesting. The library offers a clean interface similar to jQuery, making data extraction easy and, frankly, quite enjoyable.

    package extractor

    import (
        "io"
        "regexp"
        "strings"

        "github.com/PuerkitoBio/goquery"
    )

    var blockingPattern = regexp.MustCompile(`(?i)\b(noindex|none)\b`)

    // Document wraps goquery.Document for metadata extraction
    type Document struct {
        doc *goquery.Document
    }

    // NewDocument parses HTML from reader and returns a Document
    func NewDocument(r io.Reader) (*Document, error) {
        doc, err := goquery.NewDocumentFromReader(r)
        if err != nil {
            return nil, err
        }
        return &Document{doc: doc}, nil
    }

    // Title returns the page title, truncated to 200 characters
    func (d *Document) Title() string {
        title := strings.TrimSpace(d.doc.Find("head title").First().Text())
        if runes := []rune(title); len(runes) > 200 {
            return string(runes[:200])
        }
        return title
    }

    // IsNoindex checks if page is blocked by meta robots/googlebot tags
    func (d *Document) IsNoindex() bool {
        var googlebotContent, robotsContent string
        d.doc.Find("head meta").Each(func(_ int, s *goquery.Selection) {
            name := strings.ToLower(s.AttrOr("name", ""))
            content := s.AttrOr("content", "")
            if name == "googlebot" {
                googlebotContent = content
            } else if name == "robots" {
                robotsContent = content
            }
        })
        // Googlebot directive takes priority
        if googlebotContent != "" {
            return blockingPattern.MatchString(googlebotContent)
        }
        return blockingPattern.MatchString(robotsContent)
    }

    // Usage:
    // doc, err := extractor.NewDocument(strings.NewReader(html))
    // if err != nil {
    //     return err
    // }
    //
    // title := doc.Title()
    // blocked := doc.IsNoindex()

However, goquery and the net/html library are not a universal solution. In production environments, we encountered scenarios where parsing became problematic, particularly with unusually large <head> sections. This typically occurs when web pages include hundreds of kilobytes of inline JavaScript and CSS directly in the head for “optimization” purposes.
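One possible way to spot such pages before handing them to a parser is to measure how far into the document the closing head tag sits. The sketch below is only an illustration of that idea, not what EdgeComet runs in production: the names `headSize` and `oversizedHead` and the 512 KB threshold are assumptions, and a literal `</head>` inside an inline script would fool it.

```go
package main

import (
	"fmt"
	"regexp"
	"strings"
)

// maxHeadBytes is an assumed threshold chosen for illustration only.
const maxHeadBytes = 512 * 1024

// headClose finds the closing head tag regardless of case.
var headClose = regexp.MustCompile(`(?i)</head\s*>`)

// headSize returns the byte offset of the closing </head> tag,
// or -1 if the document has none.
func headSize(html string) int {
	loc := headClose.FindStringIndex(html)
	if loc == nil {
		return -1
	}
	return loc[0]
}

// oversizedHead reports whether the head section exceeds limit bytes.
// A document without </head> is judged by its total size instead.
func oversizedHead(html string, limit int) bool {
	n := headSize(html)
	if n < 0 {
		n = len(html)
	}
	return n > limit
}

func main() {
	small := "<html><head><title>ok</title></head><body></body></html>"
	// Made-up page with roughly 900 KB of inline CSS in the head.
	bloated := "<html><head><style>" + strings.Repeat("a{}", 300000) + "</style></head></html>"
	fmt.Println(oversizedHead(small, maxHeadBytes), oversizedHead(bloated, maxHeadBytes)) // prints "false true"
}
```

What to do with a flagged page (skip it, log it, or fall back to a cheaper extraction path) depends on the service; the check itself is a single regex search that stops at the first `</head>`.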

Comparison

After our internal “hot discussion” between the regex and goquery camps, I decided to settle it with testing. The plan was to download and parse a set of real pages and compare which method was actually faster in practice.

I grabbed the top 1000 websites from dataforseo.com and created a simple parser benchmark.

Test Methodology

I built a benchmark tool that:

- Fetches HTML from each website using a Chrome user-agent.

- Extracts metadata (title, meta description, h1/h2 counts, link counts) using three methods: goquery, regex, and Go’s net/html parser.

- Measures execution time for each method.

- Also benchmarks script cleaning (removing executable scripts while preserving JSON-LD).

Out of 1000 domains, 673 returned valid HTML responses. The rest failed due to HTTP errors (403 Forbidden), timeouts, or SSL issues. I ran all tests with 15 concurrent workers and a 10-second timeout per request.
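The timing and percentile part of such a tool can be sketched with the standard library alone. This is a simplified illustration rather than the benchmark code itself: the names `timeIt` and `percentile` are hypothetical, the extractor is a stand-in, and the nearest-rank percentile definition is an assumption (other tools interpolate and will give slightly different numbers).

```go
package main

import (
	"fmt"
	"math"
	"sort"
	"time"
)

// timeIt runs fn once on a page and returns the elapsed time in milliseconds.
func timeIt(fn func(string), page string) float64 {
	start := time.Now()
	fn(page)
	return float64(time.Since(start)) / float64(time.Millisecond)
}

// percentile returns the nearest-rank percentile (0 < p <= 100).
// It sorts a copy, so the caller's slice is left untouched.
func percentile(samples []float64, p float64) float64 {
	if len(samples) == 0 {
		return 0
	}
	sorted := append([]float64(nil), samples...)
	sort.Float64s(sorted)
	rank := int(math.Ceil(p / 100 * float64(len(sorted))))
	if rank < 1 {
		rank = 1
	}
	if rank > len(sorted) {
		rank = len(sorted)
	}
	return sorted[rank-1]
}

func main() {
	// Made-up inputs; the real benchmark used fetched pages.
	pages := []string{"<title>a</title>", "<title>b</title>", "<title>c</title>"}
	extract := func(html string) { _ = len(html) } // stand-in for a real extractor

	var samples []float64
	for _, page := range pages {
		samples = append(samples, timeIt(extract, page))
	}
	fmt.Printf("P50=%.4fms P90=%.4fms P99=%.4fms\n",
		percentile(samples, 50), percentile(samples, 90), percentile(samples, 99))
}
```

Reporting P90 and P99 next to the median matters here because a handful of pathological pages dominate the worst case, and a mean would hide them.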

Results

Benchmark Results Summary

Performance comparison across 673 websites from top 1000 domains

| Operation | Method | P50 | P90 | P99 | Max |
|---|---|---|---|---|---|
| Full Metadata Extraction (title, description, h1/h2, links) | Regex | 1.35ms | 5.28ms | 14.1ms | 36.2ms |
| | net/html | 1.60ms | 5.29ms | 12.8ms | 18.4ms |
| | GoQuery | 1.80ms | 5.82ms | 14.7ms | 22.4ms |
| Title-Only Extraction (includes parse time) | Regex | 8μs | 14μs | 29μs | 4.46ms |
| | net/html | 1.59ms | 5.30ms | 12.7ms | 21.0ms |
| | GoQuery | 1.63ms | 5.52ms | 13.4ms | 18.6ms |
| Script Cleaning (remove executable, preserve JSON-LD) | Regex | 3.89ms | 22.3ms | 82.4ms | 171.2ms |
| | net/html | 1.92ms | 6.24ms | 16.1ms | 50.5ms |

Test environment: macOS, Go 1.21+, 15 concurrent workers, 10-second HTTP timeout per domain.

327 domains failed due to HTTP errors (403, timeouts, SSL issues), leaving 673 successful measurements.

Title-only extraction includes full parse time for fair comparison; regex is ~200x faster for single-element extraction.

Full Metadata Extraction

For extracting title, description, heading counts, and link counts from HTML:

| Method | P50 | P90 | P99 | Max |
|---|---|---|---|---|
| Regex | 1.35ms | 5.28ms | 14.1ms | 36.2ms |
| net/html | 1.60ms | 5.29ms | 12.8ms | 18.4ms |
| GoQuery | 1.80ms | 5.82ms | 14.7ms | 22.4ms |

Figure: Full Metadata Extraction Performance. Time to extract title, description, h1/h2 counts, and links from HTML, in milliseconds (lower is better), based on 673 successful website fetches from the top 1000 domains.

Regex leads at P50 with 1.35ms. The net/html parser is close at 1.60ms, while goquery trails at 1.80ms. However, at P99, net/html shows the most predictable worst-case performance (12.8ms vs 14.1ms for regex).
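To put those P50 gaps in relative terms, the arithmetic is simple enough to check in a few lines. The helper name `slowdownPercent` is made up for this sketch; the input values are the P50 numbers from the table above.

```go
package main

import "fmt"

// slowdownPercent reports how much slower `other` is than `base`, in percent.
func slowdownPercent(base, other float64) float64 {
	return (other - base) / base * 100
}

func main() {
	// P50 values in milliseconds, copied from the table above.
	const regex, netHTML, goquery = 1.35, 1.60, 1.80

	fmt.Printf("net/html vs regex:   %.1f%% slower\n", slowdownPercent(regex, netHTML))   // 18.5%
	fmt.Printf("goquery vs regex:    %.1f%% slower\n", slowdownPercent(regex, goquery))   // 33.3%
	fmt.Printf("goquery vs net/html: %.1f%% slower\n", slowdownPercent(netHTML, goquery)) // 12.5%
}
```

In absolute terms these gaps are a fraction of a millisecond per page, which is why the tail latencies are the more interesting part of the table.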

Title-Only Extraction

When extracting just the title tag:

| Method | P50 | P90 | P99 |
|---|---|---|---|
| Regex | 8μs | 14μs | 29μs |
| net/html | 1.59ms | 5.30ms | 12.7ms |
| GoQuery | 1.63ms | 5.52ms | 13.4ms |

Figure: Title-Only Extraction Performance. Time to parse HTML and extract just the title tag (673 websites), in milliseconds (lower is better); includes parse time for DOM methods.

Note: DOM parser times include full document parsing. Once parsed, DOM traversal to find the title takes ~0μs, but you still pay the parsing cost upfront.

Regex is ~200x faster for single-element extraction. This is the key insight: regex doesn’t parse the document. It scans the HTML string until it finds the pattern and stops. DOM parsers must tokenize and build the full document structure before any extraction can happen.
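A quick way to see this is to look at where the title match ends in a large document. The sketch below builds a made-up page of roughly 2 MB with the title near the top; `titleMatchEnd` is a hypothetical helper, and the pattern is the same title expression used earlier.

```go
package main

import (
	"fmt"
	"regexp"
	"strings"
)

var titlePattern = regexp.MustCompile(`(?is)<title[^>]*>(.*?)</title>`)

// titleMatchEnd returns the byte offset where the first title match ends,
// or -1 if the document has no title.
func titleMatchEnd(html string) int {
	loc := titlePattern.FindStringIndex(html)
	if loc == nil {
		return -1
	}
	return loc[1]
}

func main() {
	head := "<html><head><title>Example</title></head><body>"
	// Roughly 2 MB of filler body, made up for illustration.
	page := head + strings.Repeat("<p>filler</p>", 160000) + "</body></html>"

	fmt.Printf("document: %d bytes, title match ends at byte %d\n",
		len(page), titleMatchEnd(page))
}
```

The match is complete within the first few dozen bytes, so the megabytes that follow are irrelevant to the result. The flip side is a page with no title at all: then the regex has to scan the whole document before giving up.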

For extracting a single element, regex wins decisively. But if you need multiple elements, the DOM parsing cost gets amortized across all extractions: you parse once, then traverse for essentially free.

Script Cleaning

I also tested removing executable scripts while preserving JSON-LD and template scripts:

| Method | P50 | P90 | P99 |
|---|---|---|---|
| Regex | 3.89ms | 22.3ms | 82.4ms |
| net/html | 1.92ms | 6.24ms | 16.1ms |

Figure: Script Cleaning Performance. Time to remove executable scripts while preserving JSON-LD and template scripts (673 websites), in milliseconds (lower is better).

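Here the results flip. The net/html parser is 2x faster at P50 and 5.1x faster at P99. The regex approach shows significant P99 spikes (82.4ms vs 16.1ms) on pages with complex nested scripts or unusual patterns. Go’s regexp engine guarantees linear-time matching, so this is not catastrophic backtracking, but the work still grows with the amount of script content each pattern has to scan.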
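For reference, a regex-based cleaner of this kind can look roughly like the sketch below. It is a simplified illustration, not the code that was benchmarked: the names `cleanScripts`, `scriptPattern`, and `keepTypePattern` are hypothetical, it only preserves JSON-LD (not template scripts), and it will misbehave on attribute values that contain `>`.

```go
package main

import (
	"fmt"
	"regexp"
)

var (
	// scriptPattern matches a whole <script>...</script> element, lazily,
	// and captures the attribute text of the opening tag.
	scriptPattern = regexp.MustCompile(`(?is)<script\b([^>]*)>.*?</script\s*>`)
	// keepTypePattern matches a type attribute that marks JSON-LD data.
	keepTypePattern = regexp.MustCompile(`(?i)\btype\s*=\s*["']?application/ld\+json`)
)

// cleanScripts removes script elements except JSON-LD blocks.
func cleanScripts(html string) string {
	return scriptPattern.ReplaceAllStringFunc(html, func(el string) string {
		// el is a full match, so re-matching it always succeeds.
		attrs := scriptPattern.FindStringSubmatch(el)[1]
		if keepTypePattern.MatchString(attrs) {
			return el // structured data: keep as-is
		}
		return "" // executable script: drop
	})
}

func main() {
	page := `<head><script>alert(1)</script>` +
		`<script type="application/ld+json">{"@type":"Article"}</script></head>`
	fmt.Println(cleanScripts(page))
	// prints: <head><script type="application/ld+json">{"@type":"Article"}</script></head>
}
```

Every script body has to be scanned up to its closing tag, so pages with megabytes of inline JavaScript make this approach expensive, which is consistent with the tail latencies above.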

Conclusions

Single Element Extraction: Regex Works

For extracting just one tag (a title, a specific meta tag), regex is a reasonable choice. It’s fast, has no dependencies, and the pattern is easy to understand. The 200x speed advantage over DOM parsers for title extraction is real (8 microseconds vs 1.6 milliseconds). Regex doesn’t parse the document; it scans until it finds the pattern and stops. If you’re building a high-throughput crawler that only needs the page title, regex delivers.

Multiple Extractions: net/html Wins

The picture changes when you need several pieces of data from the same page. With net/html (or goquery), you pay the parsing cost once. After that, accessing any element is essentially free: my benchmarks showed ~0 microseconds for title extraction after the DOM was built.

If you need title, description, canonical URL, Open Graph tags, and heading structure from each page, the DOM approach becomes more efficient. The upfront parsing cost amortizes across all extractions, and you avoid running multiple regex patterns over megabytes of HTML.

Developer Experience Matters

goquery wraps net/html with a jQuery-like API. It’s slightly slower than raw net/html, but the code is cleaner:

    // GoQuery - readable and maintainable
    title := doc.Find("title").Text()
    canonical := doc.Find(`link[rel="canonical"]`).AttrOr("href", "")

    // vs regex - faster but harder to extend
    matches := titleRegex.FindStringSubmatch(html)

When throughput isn’t your primary constraint, the maintainability advantage of goquery may outweigh the performance difference. New team members understand doc.Find("title") immediately.

Beyond Speed: Robustness and Maintainability

In practical applications, raw speed isn’t everything. Consider:

- Robustness – DOM parsers handle malformed HTML gracefully. Regex patterns can break on unexpected markup, missing quotes, or nested tags.

- Maintainability – Adding a new field to extract is one line with goquery, but might require crafting and testing a new regex pattern.

- Debugging – When extraction fails, DOM-based code is easier to debug. You can inspect the parsed tree. With regex, you’re staring at pattern matching failures.

- Extraction Reliability – As noted earlier, even net/html has limits. Extremely large documents or <head> sections filled with massive inline CSS and JS can cause the parser to fail or return inconsistent results.

Our Decision

Initially, I used regex for EdgeComet’s metadata extraction. After internal discussion and running these benchmarks, I decided to switch to net/html.

Why? We extract a variety of data from each page: not just the title, but description, canonical URLs, robots directives, Open Graph tags, and more. EdgeComet works with a huge variety of HTML pages from sources we don’t control and can’t predict. I’ve seen everything from perfectly valid HTML5 to decade-old markup with unclosed tags and invalid nesting.

Robustness and reliability won over raw speed. When the rendering process itself takes 3, 5, or 10 seconds in Headless Chrome, adding 20-50 milliseconds for extraction is negligible. The DOM parser handles edge cases I haven’t even encountered yet, and the code is easier to extend when we need to extract new fields.

The Takeaway

Choose based on your actual requirements:

- One element, maximum speed: Regex

- Multiple elements from same page: net/html or goquery

- Maintainability over raw performance: goquery

- Unknown/messy HTML sources: DOM parsers

The P50 gap for full metadata extraction (1.35ms for regex versus 1.60ms for net/html and 1.80ms for goquery) might matter at scale, or it might be negligible compared to your network latency. Profile your specific use case rather than following general advice.

If you are dealing with JavaScript rendering challenges and would rather focus on your project tasks, EdgeComet might be the right fit. We’ve built our service to handle these exact scenarios at scale, managing the JS rendering so you don’t have to.