I recently investigated a regression where a client’s page titles and social previews were failing for some requests but not others. The symptoms were specific: “View Page Source” revealed an empty <head>, while DevTools showed the metadata tags correctly populated. The tags existed in the DOM but were absent from the initial HTML payload. We traced this to the introduction of streaming metadata in Next.js 15.2.

The async-first mindset has been spreading through React development for years – skeleton pages, lazy-loaded everything, streaming responses. The motivation makes sense on paper: faster Time to First Byte, better perceived performance. But when this approach reaches metadata – the one thing that absolutely needs to be in the initial HTML – the results are broken SEO, failed social previews, and accessibility violations.

This article examines why defaulting to async for everything is not a universal solution: it often masks underlying inefficiencies rather than solving them. When performance is the goal, backend optimization should be the first response, not streaming workarounds for critical headers.

The Mechanics of Streaming Metadata

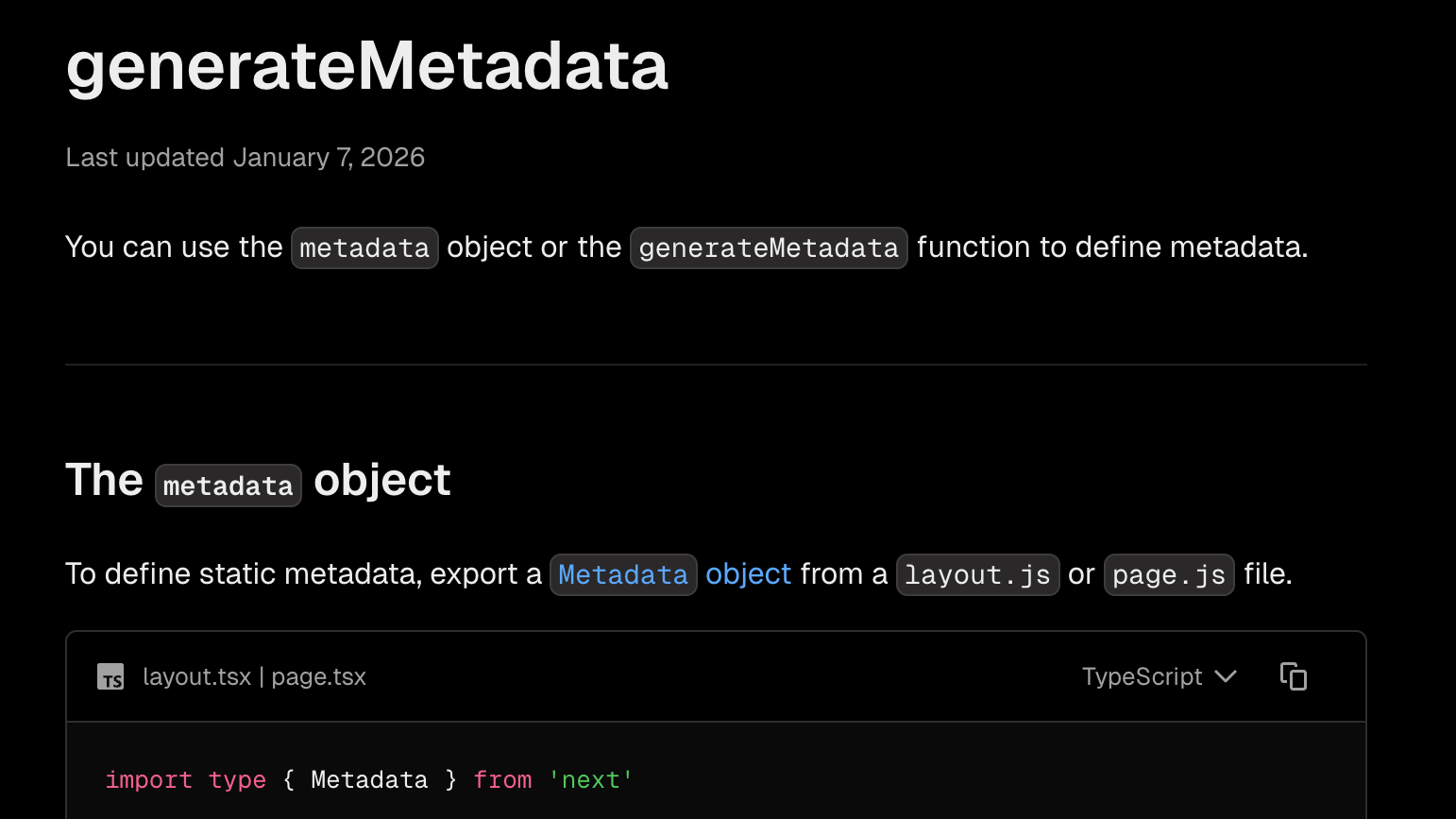

Prior to Next.js 15.2, generateMetadata was a blocking operation. The server awaited the resolution of the metadata promise, generated the <head>, and then flushed the initial HTML chunk.

    // app/products/[id]/page.tsx
    export async function generateMetadata({ params }) {
      const { id } = await params
      const product = await fetchProduct(id)
      return {
        title: product.name,
        description: product.description,
        openGraph: {
          title: product.name,
          description: product.description,
          images: [product.image],
        },
      }
    }

In Next.js 15.2, the framework defaults to a streaming strategy. For standard browser requests, the server flushes the HTML shell immediately. Because generateMetadata is asynchronous, the <head> is closed and sent without the dynamic tags. When the promise resolves, the metadata is sent in a subsequent chunk and injected into the DOM post-hydration.

This creates a fundamental architectural conflict: the HTML specification requires metadata in the <head>, but once a chunk is flushed and the tag is closed, it cannot be reopened. Any subsequent metadata must technically reside in the <body> or be inserted via script, violating the expectations of many static parsers.
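To make the consequence concrete, here is a rough sketch of what a non-executing client sees. The two payloads are simplified, hypothetical stand-ins (not real Next.js output), and `readHeadMetadata` is an illustrative helper that mimics a lightweight scraper: it looks only inside `<head>` and runs no JavaScript.

```typescript
// Hypothetical payload: blocking mode, metadata is inside <head>.
const blockingHtml =
  '<html><head><title>Blue Widget</title>' +
  '<meta property="og:title" content="Blue Widget"></head>' +
  '<body><main>...</main></body></html>';

// Hypothetical payload: streaming mode, <head> was flushed before the
// metadata resolved, so the tags arrive later, outside <head>.
const streamedHtml =
  '<html><head></head><body><main>...</main>' +
  '<title>Blue Widget</title>' +
  '<meta property="og:title" content="Blue Widget"></body></html>';

interface HeadMetadata {
  title: string | null;
  ogTitle: string | null;
}

// Mimics a simple static parser: extract <head>, then read tags from it only.
function readHeadMetadata(html: string): HeadMetadata {
  const head = /<head[^>]*>([\s\S]*?)<\/head>/i.exec(html)?.[1] ?? '';
  const title = /<title[^>]*>([\s\S]*?)<\/title>/i.exec(head)?.[1] ?? null;
  const ogTitle =
    /<meta[^>]+property="og:title"[^>]+content="([^"]*)"/i.exec(head)?.[1] ?? null;
  return { title, ogTitle };
}

console.log(readHeadMetadata(blockingHtml));
console.log(readHeadMetadata(streamedHtml));
```

Under these assumptions, the first call finds both values and the second returns `null` for both, even though the same tags are present further down the document.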

The Fragility of Bot Detection

Next.js attempts to mitigate SEO impact by detecting “HTML-limited” bots via User-Agent sniffing. If a match is found, it reverts to blocking behavior.

    // next.config.js
    module.exports = {
      htmlLimitedBots: /Googlebot|Bingbot|Slackbot|Twitterbot|LinkedInBot|facebookexternalhit/i,
    }

This approach introduces several failure modes:

- Incomplete Lists: New crawlers and AI agents (e.g., GPTBot, ClaudeBot) emerge constantly. At EdgeComet, we maintain a list of more than 50 different user bots, agents, and patterns. Even with a comprehensive collection, we frequently find cases requiring updates. AI bots, in particular, often rotate their User-Agent strings without updating public documentation. We usually discover these changes only through deep log file analysis. If a bot isn’t in your specific regex, it receives the streamed version and misses your metadata.

- Scraper Limitations: Most social media scrapers (WhatsApp, Discord, LinkedIn) are lightweight HTTP clients, not full browser engines. They parse the initial HTML chunk and rarely execute the JavaScript required to “find” streamed metadata.

- Caching Persistence: LinkedIn’s crawler, for instance, caches the initial fetch for days. If that first fetch does not trigger the bot detection, the broken preview can persist for up to a week.
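The incomplete-list problem is easy to reproduce with the regex from the config example above. This is only a sketch: `getsBlockingMetadata` is a hypothetical helper, not a Next.js API, and the User-Agent strings are illustrative and may not match what these clients send today.

```typescript
// Same pattern as the config example in this section.
const htmlLimitedBots =
  /Googlebot|Bingbot|Slackbot|Twitterbot|LinkedInBot|facebookexternalhit/i;

// Hypothetical helper: true when a request would get the blocking response.
function getsBlockingMetadata(userAgent: string): boolean {
  return htmlLimitedBots.test(userAgent);
}

// Illustrative User-Agent strings (assumptions, not authoritative values).
const sampleAgents: Record<string, string> = {
  googlebot:
    'Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)',
  gptbot:
    'Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko); compatible; GPTBot/1.0',
  whatsapp: 'WhatsApp/2.23.20.0',
};

for (const [name, ua] of Object.entries(sampleAgents)) {
  console.log(name, getsBlockingMetadata(ua) ? 'blocking' : 'streamed');
}
```

With this pattern, only the Googlebot string matches. The other two fall through to the streamed response, which is exactly the case where a client that does not execute JavaScript misses the metadata.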

Performance Analysis vs. Complexity

The justification for streaming metadata is the reduction of Time to First Byte (TTFB). However, we must quantify the actual latency being saved. I profiled generateMetadata execution on a production e-commerce application.

Results (N=1000):

- Average: 23ms

- p95: 47ms

- Max: 89ms

In this environment, streaming saves less than 50ms of blocking time for 95% of requests, and under 90ms in the worst case observed. In exchange, it introduces significant architectural complexity and breaks strict HTML compliance.

If generateMetadata takes 500ms or more, streaming merely masks a backend inefficiency. The user sees a shell, but the “pop-in” of the title and browser UI creates a jarring experience that users often perceive as an unfinished or slow load.
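If you want to gather the same kind of numbers for your own application, a sketch along these lines may be enough. It is not the tooling used for the figures above: `timed` and `summarize` are hypothetical helpers, and the p95 uses the nearest-rank method, which is one of several common percentile definitions.

```typescript
interface LatencySummary {
  average: number;
  p95: number;
  max: number;
}

// Summarize a non-empty list of durations in milliseconds.
function summarize(samplesMs: number[]): LatencySummary {
  if (samplesMs.length === 0) throw new Error('no samples recorded');
  const sorted = [...samplesMs].sort((a, b) => a - b);
  const sum = sorted.reduce((acc, v) => acc + v, 0);
  // Nearest-rank p95: smallest sample with at least 95% of samples at or below it.
  const rank = Math.ceil((95 * sorted.length) / 100);
  return {
    average: sum / sorted.length,
    p95: sorted[rank - 1],
    max: sorted[sorted.length - 1],
  };
}

// Wrap any async call and record how long it took, even if it throws.
async function timed<T>(fn: () => Promise<T>, sink: number[]): Promise<T> {
  const start = performance.now();
  try {
    return await fn();
  } finally {
    sink.push(performance.now() - start);
  }
}

// Usage idea (inside generateMetadata, with a module-level `samples` array):
//   const product = await timed(() => fetchProduct(id), samples);
// and later, e.g. on an interval: console.log(summarize(samples));
```

Measure in production or under production-like load. Local numbers against a warm development database tend to understate the tail.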

Architectural Recommendations

Complexity should be a last resort. For most applications, metadata generation is a lightweight operation that does not warrant a streaming architecture.

1. Optimize the Data Source

If metadata generation is blocking your render, the root cause is likely an unoptimized query.

- Indexing: Ensure lookups by slug or ID are indexed.

- Caching: Implement Redis or in-memory caching for metadata.

- Denormalization: Store metadata in a read-optimized format during the write cycle.
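As one illustration of the caching option, here is a minimal in-memory sketch with a time-to-live. Everything in it is an assumption for demonstration purposes: `ProductMeta`, `cachedLoader`, and the injected `now` clock are hypothetical names, and a multi-instance deployment would more likely use a shared store such as Redis.

```typescript
interface ProductMeta {
  title: string;
  description: string;
}

type Loader = (id: string) => Promise<ProductMeta>;

// Wrap a loader with a per-id cache. Promises are cached rather than values,
// so concurrent requests for the same id share a single lookup.
function cachedLoader(
  load: Loader,
  ttlMs: number,
  now: () => number = Date.now,
): Loader {
  const cache = new Map<string, { promise: Promise<ProductMeta>; expiresAt: number }>();
  return (id) => {
    const hit = cache.get(id);
    if (hit && hit.expiresAt > now()) return hit.promise;
    const promise = load(id);
    cache.set(id, { promise, expiresAt: now() + ttlMs });
    // Do not keep failed lookups around: evict the entry if the load rejects.
    promise.catch(() => {
      if (cache.get(id)?.promise === promise) cache.delete(id);
    });
    return promise;
  };
}

// Usage idea: const getMeta = cachedLoader(fetchProductMeta, 60_000);
// then call `await getMeta(id)` from generateMetadata.
```

The TTL bounds how stale a title or description can get after an edit. If that matters, invalidate the entry in the write path instead of waiting for expiry.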

2. Force Blocking Behavior

For applications where SEO and social reliability are paramount, I recommend opting out of streaming metadata by defining a catch-all bot regex.

    // next.config.js
    module.exports = {
      htmlLimitedBots: /.*/,
    }

This restores the predictable behavior of sending a complete HTML document in the initial response.

3. Static Metadata

For deterministic pages, prefer export const metadata. Static metadata is baked into the page at build time and never streams, eliminating the runtime overhead entirely.
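A minimal sketch of the static form, assuming a hypothetical `app/about/page.tsx` with made-up strings. In a real project you would typically also annotate the object with the `Metadata` type exported by `next`.

```typescript
// app/about/page.tsx (hypothetical path and content)
export const metadata = {
  title: 'About Us',
  description: 'Who we are and what we build.',
  openGraph: {
    title: 'About Us',
    description: 'Who we are and what we build.',
  },
};
```

Because nothing here depends on request-time data, there is no promise to await and nothing to stream.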

Conclusion

Streaming metadata is a sophisticated solution to a problem that is better solved through backend optimization. Page metadata is the primary API for search engines, social platforms, and accessibility tools. Treating it as secondary content to be streamed in later introduces unnecessary volatility.

The stability of the initial HTML payload should take precedence over marginal micro-optimizations in TTFB. Profile your application: if your metadata generation is fast, keep it blocking. If it is slow, fix the query.

SEO can feel like a distant concern for developers, something for marketing to handle, not engineering. But if organic traffic is your primary acquisition channel, technical SEO becomes a core business function. A migration that breaks crawlability leads to traffic drops, then revenue drops, then budget cuts. The industry has seen development teams downsized after React migrations tanked search visibility.